Why PCI Will Continue to Fail

Christian Moldes has a great article up on CSIAC titled “Compliant but not Secure: Why PCI-Certified Companies Are Being Breached”. It is a good read on why organizations that have achieved their PCI attestation continue to get compromised. In summary, the attestation has many shortcomings, ranging from improper expectations to missing controls. While I agree with many of his points, I want to dive a bit deeper into his comments on incident response.

PCI and the South Park Underpants Gnomes

Way back in season 2, South Park aired an episode titled “Gnomes” about the ill-conceived business plan of some local gnomes who were trying to make money by collecting underpants: Phase 1, collect underpants; Phase 2, ?; Phase 3, profit. The idea is absurd, but it highlights the problems that occur when you don’t think through the full process. PCI can feel a lot like the underpants gnomes, as there is plenty of focus on protection and on responding to a data breach, but little to no guidance on how to identify when a protection layer has failed or how to detect that a breach has occurred.

Review Logs…?…Profit!

PCI control 10.6 defines the entirety of breach detection. In short, it states that logs and security events should be reviewed daily for “abnormalities or suspicious activity”. But what exactly is a security event? Most logging systems are based on Syslog, and Syslog has no facility or severity that identifies “security events”, so what is someone supposed to look for? Operational and security events are mostly mixed together, and few vendors document the meaning of their log entries, so how is an analyst supposed to consistently identify “suspicious” events or “abnormalities”? While PCI is extremely specific when it comes to firewalls or what constitutes a proper password, it provides very little guidance on how an organization should detect breaches. This seems like a missed opportunity and a huge disservice to the community.

Stop Blaming the SOC Staff

This brings me back to Christian’s article. In section 3.1.4 he states:

“For example, Target confirmed that the cyber-attack vectors against their retailer’s point-of-sale (POS) systems triggered alarms and their information security team chose to ignore”

This is a pretty harsh statement, as it implies the security staff was willfully negligent. As mentioned above, PCI provides very little guidance on what to look for, so it is entirely possible that this was the first time the staff had seen these log entries. Had PCI provided guidance on what to look for, especially with POS devices, this compromise might have been detected. Further, the statement lacks context. Anyone who has reviewed logs knows that the signal-to-noise ratio is very low. Were these the only “alerts” seen that day, or were there 10,000 false positives for every alert that mattered? If it was the latter, the situation is less about “choosing to ignore” and more about staff being handed an impossible task.

We Need New Tools

Back in the early 2000s, I created the first log analysis class for SANS. While creating that course material, I had a revelation: log review does not scale. Sure, we try to do it, and just like the Underpants Gnomes we convince ourselves that it’s a solid business model, but the noisy nature of logs makes it far too difficult to ensure that security events are consistently identified. In fact, I would argue that logs are better suited to forensics, when a target and time window have already been identified so that much of the noise can be culled away.
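To make the forensics point concrete, here is a minimal Python sketch of culling logs once a target host and time window are known. The `LogRecord` type, the `cull` helper, and the synthetic host names are hypothetical illustrations, not part of any real logging product; real logs would first need to be parsed into this shape.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class LogRecord:
    # Hypothetical, already-parsed log entry.
    timestamp: datetime
    host: str
    message: str


def cull(records, target_host, start, end):
    """Keep only records from target_host whose timestamp falls in [start, end]."""
    return [
        r for r in records
        if r.host == target_host and start <= r.timestamp <= end
    ]


# Synthetic data: 100 entries, one per minute, spread across five hosts.
base = datetime(2024, 1, 1, 12, 0, 0)
records = [
    LogRecord(base + timedelta(minutes=i), f"host{i % 5}", f"event {i}")
    for i in range(100)
]

# Forensic question: what did host3 log between minutes 10 and 30?
hits = cull(records, "host3", base + timedelta(minutes=10), base + timedelta(minutes=30))
print(len(records), "->", len(hits))  # prints "100 -> 4"
```

Narrowing 100 records to the handful from one host in a 20-minute window is the kind of reduction that makes review tractable during an investigation. During routine daily review there is no target or window to filter on, which is why the noise stays.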

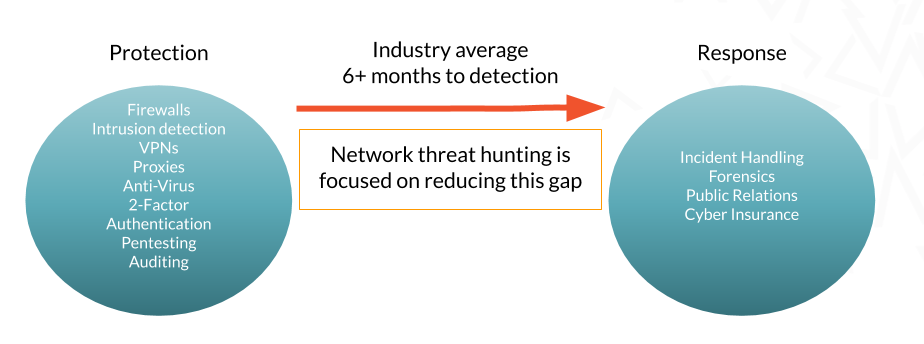

I’ve shared the infographic in Figure 1 before. As an industry, it takes us over six months to identify that a compromise has taken place. That number is actually misleading, as most organizations learn of a breach from an outside third party, not through their internal detection mechanisms. This is why I have become such an advocate of network threat hunting. What we are doing today is not working. It’s time to stop collecting underpants and move on to a model that actually works.

Figure 1: Network threat hunting reduces the time between when protection technologies fail and response can be implemented.

Why PCI Needs a Threat Hunting Control

To circle back to Christian’s article, he makes a lot of good points about the shortcomings of PCI, but I feel he misses the most obvious failing: PCI does not include any controls that validate a network as being free from compromise. Over the years we’ve seen a number of organizations achieve PCI compliance while in a compromised state. This feels like a huge shortcoming. There are very specific requirements around penetration testing (control 11.3) to identify points of vulnerability, but no controls to identify whether those vulnerabilities have already been exploited.
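As a rough illustration of what a check for an existing compromise might look for, the sketch below implements one widely used network threat hunting heuristic: flagging host pairs whose connections recur at highly regular intervals, as automated command-and-control beacons often do. This is a simplified example, not the author’s tooling or a PCI-defined method. The `beacon_candidates` function, the coefficient-of-variation threshold (`max_cv`), the minimum connection count, and the sample traffic are all hypothetical assumptions.

```python
import random
import statistics
from collections import defaultdict


def beacon_candidates(connections, max_cv=0.1, min_connections=10):
    """Flag (src, dst) pairs whose inter-connection intervals are very regular.

    connections: iterable of (timestamp_seconds, src, dst) tuples.
    max_cv and min_connections are hypothetical, untuned thresholds.
    Returns a list of (src, dst, cv) sorted from most to least regular.
    """
    by_pair = defaultdict(list)
    for ts, src, dst in connections:
        by_pair[(src, dst)].append(ts)

    results = []
    for (src, dst), times in by_pair.items():
        if len(times) < min_connections:
            continue  # too few connections to judge regularity
        times.sort()
        intervals = [b - a for a, b in zip(times, times[1:])]
        mean = statistics.mean(intervals)
        if mean == 0:
            continue
        # Coefficient of variation: spread of intervals relative to their mean.
        cv = statistics.pstdev(intervals) / mean
        if cv <= max_cv:
            results.append((src, dst, round(cv, 3)))
    return sorted(results, key=lambda r: r[2])


random.seed(1)
conns = []

# Hypothetical implant: calls home every ~60 s with +/- 2 s of jitter.
t = 0.0
for _ in range(50):
    t += 60 + random.uniform(-2, 2)
    conns.append((t, "10.0.0.5", "203.0.113.10"))

# Hypothetical user browsing: irregular, exponentially distributed gaps.
t = 0.0
for _ in range(50):
    t += random.expovariate(1 / 60)
    conns.append((t, "10.0.0.7", "198.51.100.20"))

print(beacon_candidates(conns))  # only the 10.0.0.5 -> 203.0.113.10 pair is flagged
```

Regularity alone is not proof of compromise (software update checks and monitoring agents also beacon), so flagged pairs are leads for an analyst to investigate, not verdicts.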

Lessons Learned

Over the years in this industry, I’ve found that we jump too quickly to blaming individuals: “Bob should have…” or “Sue didn’t…”. I think we blame people because it’s the easiest way to close the issue and move on. However, what I’ve learned is that the root cause of most problems is process, not people. When a person does make a mistake, it usually traces back to a vague process or a lack of validations or controls. When it comes to accepting credit cards, PCI defines the security process and the controls we are all supposed to follow. If I can receive my attestation while I’m currently compromised, the problem is clearly not with the people. PCI needs to step up and add controls to validate a network’s integrity.

Chris has been a leader in the IT and security industry for over 20 years. He’s a published author of multiple security books and the primary author of the Cloud Security Alliance’s online training material. As a Fellow Instructor, Chris developed and delivered multiple courses for the SANS Institute. As an alumnus of Y Combinator, Chris has assisted multiple startups, helping them improve their product security through continuous development and identify their product-market fit.